Supported Frameworks & Applications

SAKURA™

Modules and Cards

SAKURA-II modules and cards are architected to run the latest vision and Generative AI models with market-leading energy efficiency and low latency. Whether you want to integrate AI functionality into an existing system or are creating new designs from scratch, these modules and cards provide a platform for your AI system development.

In conjunction with the MERA software and compiler framework, designers can use these platforms to quickly source pre-trained AI models, complete system designs, and perform post-training calibration and quantization.
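Post-training quantization maps a trained model's floating-point values onto a lower-precision format such as INT8, using a scale derived from calibration data. The sketch below shows one generic approach in plain Python: symmetric, per-tensor, min/max calibration. This textbook method was chosen for illustration and is an assumption. It is not the MERA calibration algorithm or API, and the function names and calibration values are hypothetical.

```python
"""Minimal sketch of symmetric per-tensor INT8 post-training quantization.

Illustrative only: a generic textbook technique with hypothetical names,
NOT the MERA calibration algorithm or API.
"""


def calibrate_scale(calibration_values: list[float]) -> float:
    """Derive a symmetric INT8 scale from the largest magnitude observed."""
    max_abs = max(abs(v) for v in calibration_values)
    # Map [-max_abs, max_abs] onto the signed 8-bit range [-127, 127].
    return max_abs / 127.0 if max_abs > 0 else 1.0


def quantize(values: list[float], scale: float) -> list[int]:
    """Round each value to the nearest INT8 step and clamp to [-127, 127]."""
    return [max(-127, min(127, round(v / scale))) for v in values]


def dequantize(q_values: list[int], scale: float) -> list[float]:
    """Map INT8 codes back to approximate floating-point values."""
    return [q * scale for q in q_values]


if __name__ == "__main__":
    # Hypothetical activations collected from a few calibration inputs.
    calib = [0.02, -1.27, 0.5, 0.9, -0.3]
    scale = calibrate_scale(calib)
    codes = quantize(calib, scale)
    print(scale, codes)
    print(dequantize(codes, scale))
```

Values outside the calibrated range are clamped. This is why the calibration data should reflect the inputs the model will actually see in deployment.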

These modules and cards can also be purchased in volume for direct insertion into existing M.2 or PCIe systems, adding AI functionality immediately.


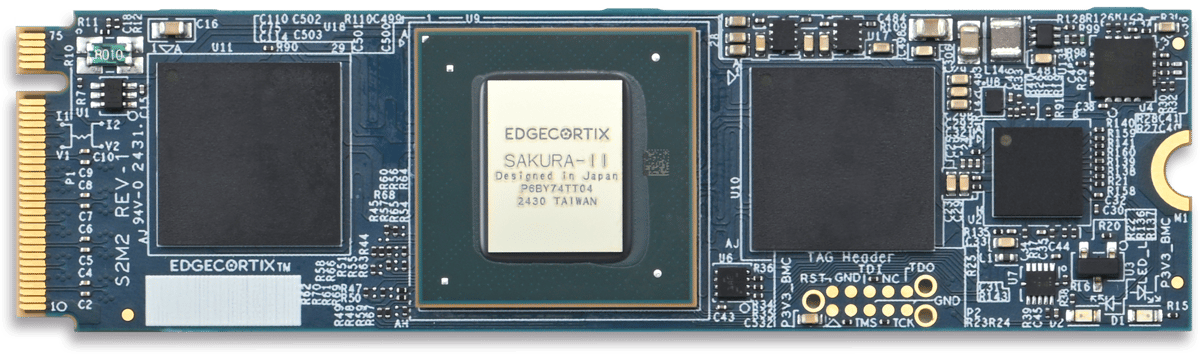

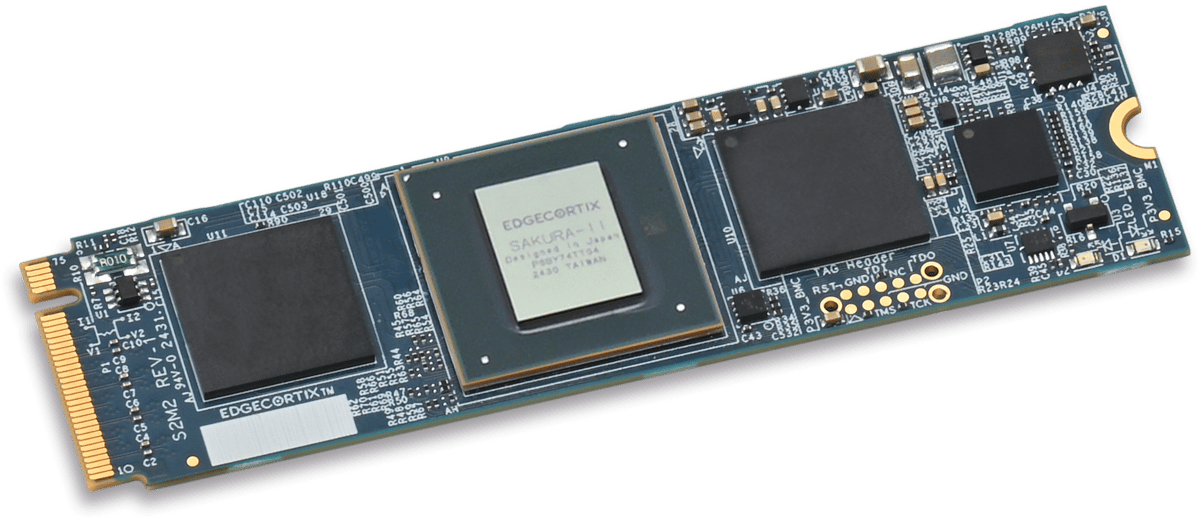

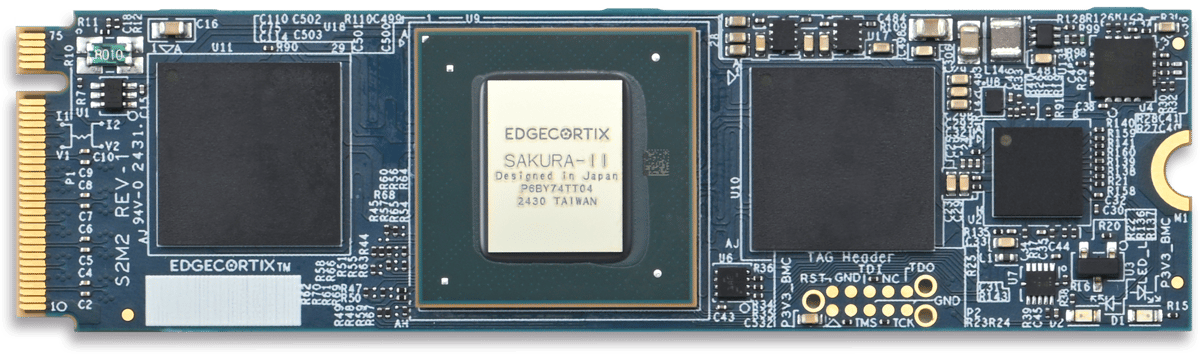

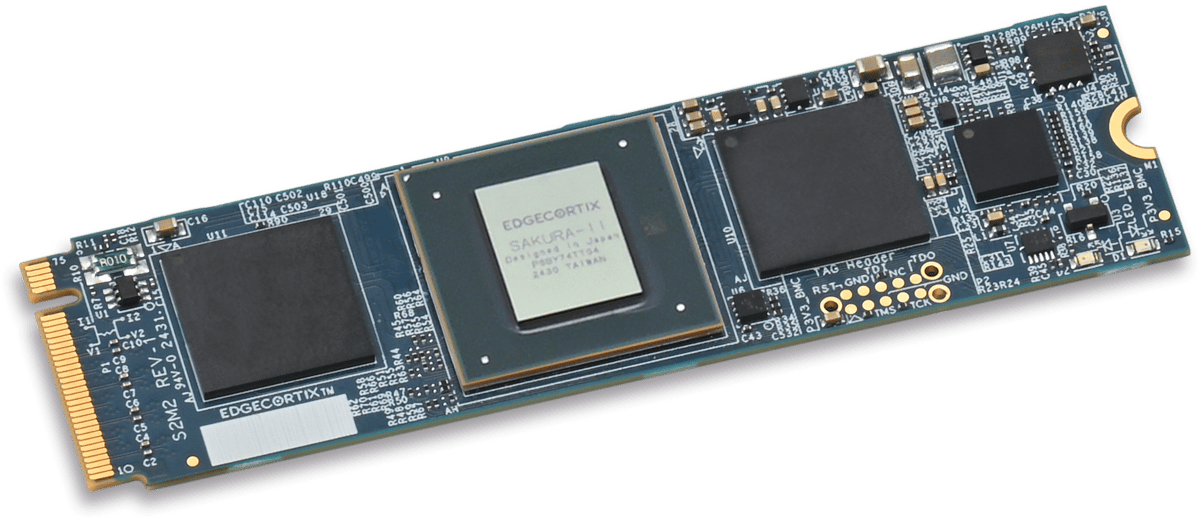

M.2 Module

The small-form-factor M.2 Module, featuring the SAKURA-II accelerator, is well suited to space-constrained designs. Its significant onboard DRAM also makes it ideal for Generative AI and other memory-intensive AI applications such as Large Language Models (LLMs).

The M.2 Module meets all M.2 specifications and can be inserted directly into M.2 slots for immediate evaluation. The 16GB M.2 Module is priced at $349. Inquire NOW!

The M.2 Module is the best choice for space-constrained and power-limited designs, offering easy integration into small edge AI systems!
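To see why onboard DRAM capacity matters for LLMs, here is a back-of-the-envelope estimate of whether a model's weights fit in the M.2 Module's 16GB. This is a sketch under stated assumptions. It counts weights only and ignores activations, KV cache, and runtime buffers. It uses 1 GB = 1e9 bytes, which is conservative if the 16GB is binary gigabytes. The bytes-per-parameter table (INT8 = 1, BF16 = 2) is the only model-specific input, and the parameter counts are hypothetical examples.

```python
"""Back-of-the-envelope check: do a model's weights fit in onboard DRAM?

Assumptions: weights only (activations, KV cache and runtime buffers are
ignored), 1 GB = 1e9 bytes, and the 16GB capacity quoted for the M.2 Module.
"""

BYTES_PER_PARAM = {"INT8": 1, "BF16": 2}
M2_DRAM_GB = 16  # SAKURA-II M.2 Module onboard LPDDR4


def weight_footprint_gb(num_params: float, dtype: str) -> float:
    """Return the size of the model weights in gigabytes (1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def fits_in_dram(num_params: float, dtype: str, dram_gb: float = M2_DRAM_GB) -> bool:
    """True if the weights alone fit within the given DRAM capacity."""
    return weight_footprint_gb(num_params, dtype) <= dram_gb


if __name__ == "__main__":
    # Hypothetical model sizes: 1B, 7B and 13B parameters.
    for params in (1e9, 7e9, 13e9):
        for dtype in ("INT8", "BF16"):
            print(f"{params / 1e9:.0f}B params, {dtype}: "
                  f"{weight_footprint_gb(params, dtype):.1f} GB, "
                  f"fits={fits_in_dram(params, dtype)}")
```

For example, a 7B-parameter model needs about 7 GB for INT8 weights, or 14 GB in BF16. A 13B-parameter model fits only at INT8 under these assumptions.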

M.2 Module Specifications

| Feature | Description | Specification |
|---|---|---|
| Large DRAM Capacity | 16GB of LPDDR4 DRAM, enabling efficient processing of complex vision and Generative AI workloads | 16GB (2 banks of 8GB LPDDR4) |
| Enhanced Memory Bandwidth | Up to 4x more DRAM bandwidth than competing AI accelerators, ensuring superior performance for LLMs and LVMs | Up to 68 GB/sec |
| High Performance | SAKURA-II edge AI accelerator running the latest AI models | 60 TOPS (INT8), 30 TFLOPS (BF16) |
| Low Power | Optimized for low power even during high utilization | 10W (typical) |
| Form Factor | | M.2 Key M 2280 (22mm x 80mm) |
| Module Height | | D6 (3.2mm top, 1.5mm bottom) |
| Host Interface | | PCIe Gen 3.0 x4 |
| Temperature Range | | -20°C to 85°C |
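A few derived figures can be computed from the table: compute per watt at typical power, and the host-link bandwidth of the PCIe Gen 3.0 x4 interface compared with onboard DRAM bandwidth. This sketch assumes PCIe Gen 3.0 signals at 8 GT/s per lane with 128b/130b encoding. It ignores protocol overhead, so real host throughput is somewhat lower. The constant and function names are illustrative.

```python
"""Derived figures for the SAKURA-II M.2 Module, from the spec table values.

Assumes PCIe Gen 3.0 at 8 GT/s per lane with 128b/130b encoding; protocol
overhead (TLP headers, flow control) is ignored, so real throughput is lower.
"""

INT8_TOPS = 60
BF16_TFLOPS = 30
TYPICAL_POWER_W = 10
DRAM_BANDWIDTH_GBPS = 68  # "Up to 68 GB/sec"

PCIE_GEN3_GT_PER_LANE = 8.0
PCIE_GEN3_ENCODING = 128 / 130


def pcie_gen3_gbytes_per_s(lanes: int) -> float:
    """Raw one-direction PCIe Gen 3.0 bandwidth in GB/s after line encoding."""
    return PCIE_GEN3_GT_PER_LANE * PCIE_GEN3_ENCODING * lanes / 8  # bits -> bytes


if __name__ == "__main__":
    print(f"INT8 efficiency: {INT8_TOPS / TYPICAL_POWER_W:.1f} TOPS/W (typical power)")
    print(f"BF16 efficiency: {BF16_TFLOPS / TYPICAL_POWER_W:.1f} TFLOPS/W (typical power)")
    host = pcie_gen3_gbytes_per_s(4)
    print(f"PCIe Gen 3.0 x4 host link: ~{host:.2f} GB/s per direction")
    print(f"Onboard DRAM vs host link: ~{DRAM_BANDWIDTH_GBPS / host:.0f}x")
```

The x4 link works out to roughly 3.9 GB/s per direction, about 17x less than the quoted DRAM bandwidth. Keeping model weights resident in onboard DRAM avoids streaming them over the host link.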


Get the details in the SAKURA-II M.2 Product Brief


PCIe Cards

SAKURA-II PCIe Cards are high-performance edge AI accelerators delivering up to 120 TOPS in a low-profile, single-slot PCIe form factor. Their significant onboard DRAM makes them ideal for Generative AI and other memory-intensive AI applications such as Large Language Models (LLMs).

These PCIe cards meet all PCIe specifications and can be inserted directly into PCIe slots for immediate evaluation. The cards fit comfortably into a single slot, even with the attached passive or active heat sink. Because they are low profile, they occupy only half the height of a full-height slot, giving designers more room for other PCIe cards that add functionality to the system.

These PCIe cards are available in two options, with either one or two SAKURA-II AI accelerators installed. The single card, with up to 60 TOPS, will satisfy many edge AI applications. The dual card adds a second SAKURA-II with its own DRAM and associated circuitry. Its two accelerators can be used as separate AI engines or combined for greater AI inferencing performance.
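A hypothetical sketch of the "separate AI engines" mode: incoming inference requests are assigned round-robin to the two accelerators on a dual card. The `Accelerator` class and `dispatch_round_robin` function are stand-ins invented for this example. They are NOT the MERA runtime API. The calls also run sequentially here; a real deployment would likely submit work to both devices concurrently, for example with one worker per device.

```python
"""Hypothetical sketch: using the two accelerators on a dual card as separate engines.

`Accelerator` is a stand-in class invented for this example; it is NOT the
MERA runtime API. Requests are assigned round-robin so each device handles
roughly half of the stream. Calls run sequentially here for simplicity.
"""

from dataclasses import dataclass, field
from itertools import cycle


@dataclass
class Accelerator:
    """Placeholder for one SAKURA-II device (hypothetical)."""
    name: str
    handled: list = field(default_factory=list)

    def infer(self, request: str) -> str:
        # A real runtime would execute the compiled model on the device here.
        self.handled.append(request)
        return f"{self.name}:{request}"


def dispatch_round_robin(requests: list[str], devices: list[Accelerator]) -> list[str]:
    """Send each request to the next device in turn and collect the results."""
    device_cycle = cycle(devices)
    return [next(device_cycle).infer(r) for r in requests]


if __name__ == "__main__":
    devs = [Accelerator("sakura0"), Accelerator("sakura1")]
    print(dispatch_round_robin(["img0", "img1", "img2", "img3", "img4"], devs))
```

Round-robin is the simplest balancing policy. It works well when requests have similar cost, such as fixed-size camera frames.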

The single PCIe card is priced at $499, and the dual version at $799. Inquire NOW about either version!

PCIe Cards are the best choice for most edge AI designs using the industry-standard PCIe form factor.

Upgrade to the dual PCIe card for the highest AI processing capability!

PCIe Card Specifications

| Feature | Description | Specification |
|---|---|---|
| Large DRAM Capacity | Up to 32GB of LPDDR4 DRAM, enabling efficient processing of complex vision and Generative AI workloads | Single SAKURA-II: 16GB (2 banks of 8GB LPDDR4); Dual SAKURA-II: 32GB (4 banks of 8GB LPDDR4) |
| High Performance | SAKURA-II edge AI accelerator running the latest AI models | Single SAKURA-II: 60 TOPS (INT8), 30 TFLOPS (BF16); Dual SAKURA-II: 120 TOPS (INT8), 60 TFLOPS (BF16) |
| Low Power | Optimized for low power while processing AI workloads with high utilization | Single SAKURA-II: 10W typical; Dual SAKURA-II: 20W typical |
| Host Interface | Separate x8 interfaces for each SAKURA-II device | Single SAKURA-II: PCIe Gen 3.0 x8; Dual SAKURA-II: PCIe Gen 3.0 x8/x8 (bifurcated) |
| Enhanced Memory Bandwidth | Up to 4x more DRAM bandwidth than competing AI accelerators, ensuring superior performance for LLMs and LVMs | Up to 68 GB/sec |
| Form Factor | PCIe cards fit comfortably into a single slot, providing room for additional system functionality | PCIe low profile, single slot |
| Included Hardware | | Half- and full-height brackets; active or passive heat sink |
| Temperature Range | | -20°C to 85°C |
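The dual-card figures in the table follow directly from the single-device figures. The sketch below shows this under one assumption: the dual card is exactly two single-device configurations, so peak figures add linearly. Achieved throughput depends on the workload and on how the two devices are used. The `CardSpec` type and `scale_devices` helper are illustrative names, not part of any EdgeCortix software.

```python
"""Sketch: deriving the dual-card totals from per-device SAKURA-II figures.

Assumes the dual card is two identical single-device configurations; peak
figures (DRAM, TOPS, TFLOPS, typical power) therefore add linearly.
"""

from dataclasses import dataclass


@dataclass(frozen=True)
class CardSpec:
    devices: int
    dram_gb: int
    int8_tops: int
    bf16_tflops: int
    typical_power_w: int


# Per-device values from the single SAKURA-II card column.
SINGLE = CardSpec(devices=1, dram_gb=16, int8_tops=60, bf16_tflops=30, typical_power_w=10)


def scale_devices(per_device: CardSpec, n: int) -> CardSpec:
    """Aggregate n identical devices by multiplying each peak figure by n."""
    return CardSpec(
        devices=per_device.devices * n,
        dram_gb=per_device.dram_gb * n,
        int8_tops=per_device.int8_tops * n,
        bf16_tflops=per_device.bf16_tflops * n,
        typical_power_w=per_device.typical_power_w * n,
    )


if __name__ == "__main__":
    print(scale_devices(SINGLE, 2))
```

The "Up to 68 GB/sec" bandwidth is not doubled in this sketch. Each device reaches its own DRAM over its own memory interface, so the figure is best read as per device.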


Get the details in the SAKURA-II PCIe Product Brief

Explore our Complete Edge AI Platform

Unique Software

Proprietary Architecture

Efficient Hardware

"We recognized immediately the value of adding the MERA compiler and associated tool set to the RZ/V MPU series, as we expect many of our customers to implement application software including AI technology. As we drive innovation to meet our customer's needs, we are collaborating with EdgeCortix to rapidly provide our customers with robust, high-performance and flexible AI-inference solutions. The EdgeCortix team has been terrific, and we are excited by the future opportunities and possibilities for this ongoing relationship."

"Given the tectonic shift in information processing at the edge, companies are now seeking near cloud level performance where data curation and AI driven decision making can happen together. Due to this shift, the market opportunity for the EdgeCortix solutions set is massive, driven by the practical business need across multiple sectors which require both low power and cost-efficient intelligent solutions. Given the exponential global growth in both data and devices, I am eager to support EdgeCortix in their endeavor to transform the edge AI market with an industry-leading IP portfolio that can deliver performance with orders of magnitude better energy efficiency and a lower total cost of ownership than existing solutions."

"Improving the performance and the energy efficiency of our network infrastructure is a major challenge for the future. Our expectation of EdgeCortix is to be a partner who can provide both the IP and expertise that is needed to tackle these challenges simultaneously."

"With the unprecedented growth of AI/Machine learning workloads across industries, the solution we're delivering with leading IP provider EdgeCortix complements BittWare's Intel Agilex FPGA-based product portfolio. Our customers have been searching for this level of AI inferencing solution to increase performance while lowering risk and cost across a multitude of business needs both today and in the future."

"EdgeCortix is in a truly unique market position. Beyond simply taking advantage of the massive need and growth opportunity in leveraging AI across many business key sectors, it’s the business strategy with respect to how they develop their solutions for their go-to-market that will be the great differentiator. In my experience, most technology companies focus very myopically, on delivering great code or perhaps semiconductor design. EdgeCortix’s secret sauce is in how they’ve co-developed their IP, applying equal importance to both the software IP and the chip design, creating a symbiotic software-centric hardware ecosystem, this sets EdgeCortix apart in the marketplace."


SAKURA-II M.2 Modules and PCIe Cards

EdgeCortix SAKURA-II can be easily integrated into a host system for software development and AI model inference tasks.

Order an M.2 Module or a PCIe Card and get started today!